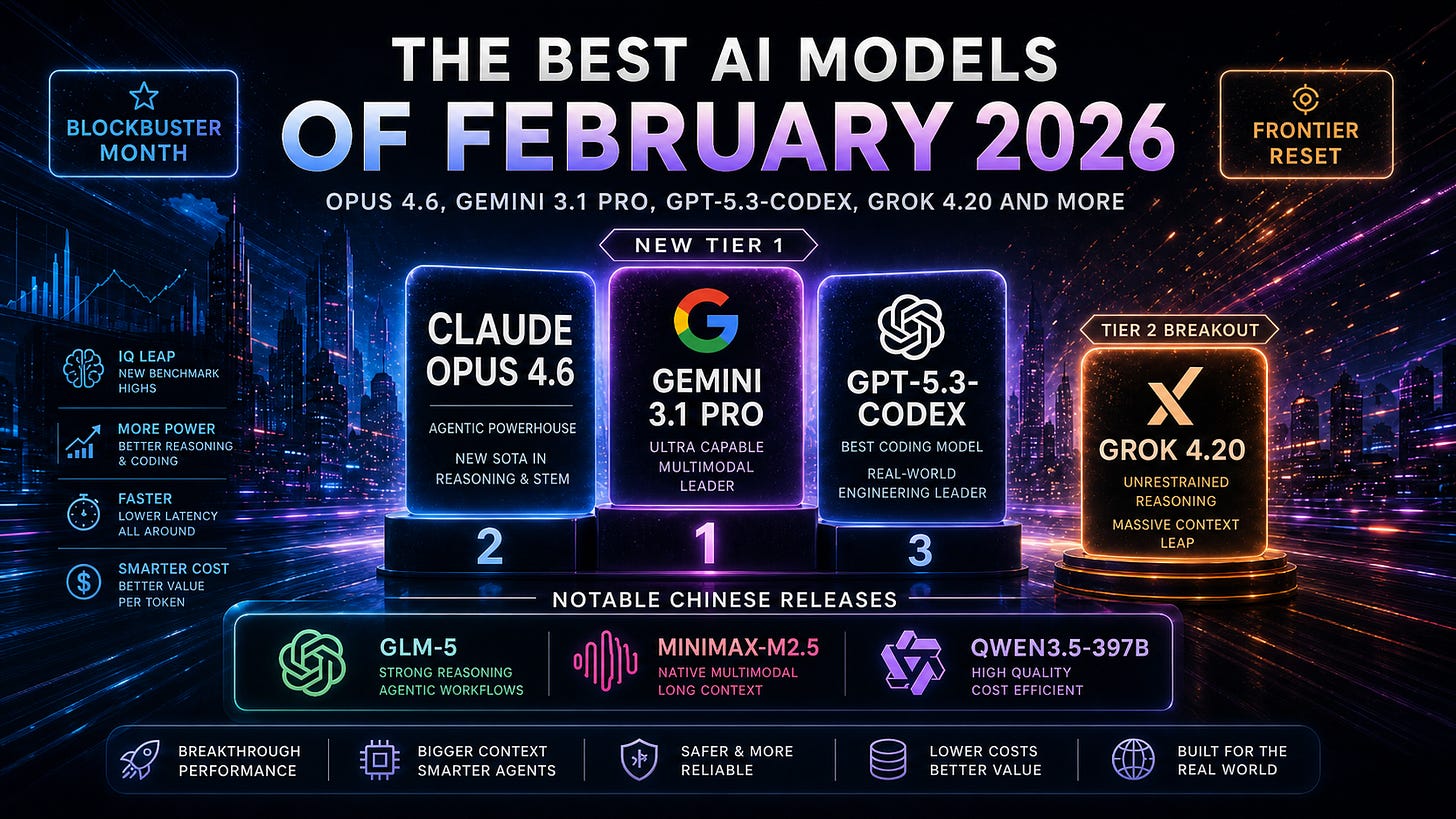

The Best AI Models of February 2026

Gemini 3.1 Pro and Opus 4.6 reset Tier 1, while GPT-5.3-Codex made OpenAI harder to compare

February was a blockbuster month for AI model releases.

January was mostly about open-weight models becoming more useful underneath the frontier. February was different. The top of the leaderboard moved.

Anthropic released Opus 4.6. OpenAI released GPT-5.3-Codex. Google released Gemini 3.1 Pro. xAI pushed Grok 4.20 into public beta. And the Chinese labs shipped another strong wave: GLM-5, MiniMax-M2.5, and Qwen3.5-397B.

The simple story is that Gemini 3.1 Pro and Opus 4.6 became the new all-around state-of-the-art models.

The more interesting story is that February did not give us one clean winner. It gave us three different frontier claims.

Google had the best broad benchmark story with Gemini 3.1 Pro.

Anthropic had the best long-horizon professional workflow story with Opus 4.6.

OpenAI had the most awkward model to rank: GPT-5.3-Codex, a model that looks like a clear step up from GPT-5.2 in coding and agentic computer work, but is not quite the same thing as a general-purpose GPT-5.3.

That made February more interesting than a normal leaderboard update. The frontier moved, but it also got messier.

February 2026 model releases

February had two clear Tier 1 general-model launches, one major OpenAI specialist launch, one important xAI beta, and a strong Chinese model wave.

February 5: Anthropic released Claude Opus 4.6

Opus 4.6 improved on Opus 4.5 in coding, long-running agentic tasks, large-codebase reliability, debugging, code review, and long-context work. Anthropic also introduced a 1M token context window in beta for an Opus-class model and emphasized everyday professional tasks like financial analysis, research, documents, spreadsheets, and presentations.

February 5: OpenAI released GPT-5.3-Codex

GPT-5.3-Codex was OpenAI’s biggest February move, but not a normal flagship release. OpenAI described it as its most capable agentic coding model to date: it combines the Codex and GPT-5 training stacks, improves on both GPT-5.2-Codex’s coding performance and GPT-5.2’s professional reasoning, and runs about 25% faster.

February 12: Z.ai released GLM-5

GLM-5 was designed for complex system engineering and long-range agent tasks. Z.ai framed it as a shift “from coding to engineering,” with stronger deep reasoning in backend architecture, complex algorithms, and stubborn bug fixing, plus DeepSeek Sparse Attention for token efficiency.

February 12: MiniMax released MiniMax-M2.5

MiniMax-M2.5 pushed heavily on coding, agentic tool use, search, office work, and economically valuable tasks. MiniMax reported 80.2% on SWE-Bench Verified, 51.3% on Multi-SWE-Bench, and 76.3% on BrowseComp with context management, while also claiming the model could run continuously for $1/hour at 100 tokens per second.

Mid-February: xAI pushed Grok 4.20 into public beta

Grok 4.20 is harder to source cleanly than the OpenAI, Anthropic, and Google releases. It appeared in public beta in February, while xAI’s developer release notes list Grok 4.20 and Grok 4.20 Multi-agent as live on March 10. So I would treat it as a February capability signal, not as a product release as clean as Opus 4.6 or Gemini 3.1 Pro.

February 16: Alibaba released Qwen3.5

Alibaba unveiled Qwen3.5 for the “agentic AI era,” with Reuters reporting claims of lower cost, better large-workload processing, and visual agentic capabilities across mobile and desktop apps. The open-weight Qwen3.5-397B-A17B model uses 397B total parameters with 17B activated and supports 262K native context, extensible to roughly 1M tokens.

February 19: Google released Gemini 3.1 Pro

Gemini 3.1 Pro was the biggest all-around release of the month. Google framed it as upgraded core intelligence for complex tasks, rolling out across the Gemini API, Vertex AI, Gemini app, NotebookLM, Google AI Studio, Antigravity, Gemini CLI, and Android Studio. Google also reported a verified 77.1% score on ARC-AGI-2, more than double Gemini 3 Pro’s reasoning performance.

That is a lot for one month.

The simple release-count story understates it. February was not just busy. It changed the top tier.

The new Tier 1

By the end of February, AI IQ’s Tier 1 looked like this:

Gemini 3.1 Pro

Claude Opus 4.6

GPT-5.3-Codex

That ranking needs a caveat.

Gemini 3.1 Pro and Opus 4.6 are clean all-around models. They are easy to compare against GPT-5.2, Gemini 3 Pro, and Opus 4.5.

GPT-5.3-Codex is different. It is clearly stronger than GPT-5.2 in important ways, especially in coding-agent work. But OpenAI did not release a general-purpose GPT-5.3 in February, and GPT-5.3-Codex did not get the same kind of broad benchmark coverage that GPT-5.2 had. So it belongs in Tier 1, but with an asterisk: it is a frontier model, but not a normal flagship model.

That distinction matters because model selection is no longer a one-column leaderboard problem.

For broad intelligence, Gemini 3.1 Pro had the strongest February claim.

For long-running professional work, Opus 4.6 had the strongest workflow claim.

For agentic coding and computer work, GPT-5.3-Codex looked like OpenAI’s most important model.

The best model depended more than usual on what kind of work you meant.

Gemini 3.1 Pro: the broad benchmark leader

Gemini 3.1 Pro was the cleanest all-around winner of February.

Google did not frame it as a minor Gemini 3 patch. It framed it as the upgraded core intelligence behind recent Deep Think progress, designed for tasks where “a simple answer isn’t enough.” The launch emphasized complex reasoning, data synthesis, visual explanations, code-based animation, API integration, and agentic development workflows.

The standout number was ARC-AGI-2. Google reported Gemini 3.1 Pro at 77.1% verified on ARC-AGI-2, more than double Gemini 3 Pro. That matters because ARC-AGI-2 is one of the better tests for novel abstract reasoning: the kind of problem-solving that is harder to explain away with benchmark contamination or memorized examples.

On AI IQ, that shows up clearly. Gemini 3.1 Pro sits at or near the top overall, and it has one of the strongest profiles across abstract, programmatic, and academic reasoning.

It also benefits from Google’s distribution. Gemini 3.1 Pro was not just a model-card release. It rolled into the Gemini app, NotebookLM, Vertex AI, Gemini Enterprise, Google AI Studio, Antigravity, Gemini CLI, and Android Studio. That matters because the model is not only competing in the lab; it is being pushed into the places where people actually work.

The weakness is the usual Gemini caveat: benchmarks and product feel have not always lined up perfectly. Google’s best models can look incredible on hard evals but still feel uneven in day-to-day chat or agent workflows. Gemini 3.1 Pro narrows that gap, but it does not erase the question.

Still, by the end of February, Gemini 3.1 Pro had the strongest claim to “best overall model.”

Opus 4.6: the professional workflow model

Opus 4.6’s case is different from Gemini’s.

Gemini 3.1 Pro had the cleaner broad benchmark story. Opus 4.6 had the better “give it a real project and let it work” story.

Anthropic emphasized coding, planning, long-running agentic tasks, large-codebase reliability, debugging, code review, financial analysis, research, and document/spreadsheet/presentation work. The model also got a 1M token context window in beta, which is especially relevant for enterprise workflows where the model has to reason over large document sets, repositories, or multi-file projects.

Anthropic also reported strong results on GDPval-AA, BrowseComp, long-context retrieval, cybersecurity, life sciences, and agentic coding. One particularly useful detail: Opus 4.6 scored 76% on an 8-needle 1M-context MRCR v2 task, compared with 18.5% for Sonnet 4.5. That is the kind of long-context retrieval result that matters in real work, not just benchmark theater.

On AI IQ, Opus 4.6 belongs in Tier 1 because it is strong across the board. But its practical advantage may be even more important than its composite score.

Opus 4.6 is the model I would most want to test for long-running tasks where the failure modes are subtle: drifting from the goal, missing buried constraints, over-editing code, ignoring project structure, or producing something polished but not quite right.

That is not the same as saying it beats Gemini 3.1 Pro on every dimension. It does not.

It is saying that “best benchmark model” and “most trustworthy model for messy professional work” are no longer obviously the same thing.

GPT-5.3-Codex: the hardest model to rank

GPT-5.3-Codex is the most interesting February release because it created a ranking problem.

On one hand, it looks like a major step forward. OpenAI says GPT-5.3-Codex combines the Codex and GPT-5 training stacks, improves on both GPT-5.2-Codex and GPT-5.2, runs about 25% faster, and sets new highs on SWE-Bench Pro and Terminal-Bench. OpenAI also describes it as moving Codex beyond writing code toward doing end-to-end work on a computer.

On the other hand, it is not GPT-5.3.

That sounds like a pedantic distinction, but it matters. GPT-5.3-Codex is clearly a frontier agentic coding model. It may also be a very strong general professional-work model. But because OpenAI did not release a normal general-purpose GPT-5.3, we have less clean evidence for where the underlying GPT line stood in February.

That makes GPT-5.3-Codex feel like a partial preview of OpenAI’s next frontier rather than the next clean GPT flagship.

For developers, this may not matter. If your work is coding, tool use, repo search, debugging, terminal work, and computer operation, GPT-5.3-Codex is one of the first models to test.

For model rankings, it matters a lot. A coding-specialized frontier model can beat previous GPT releases on many important tasks without telling us exactly how OpenAI’s general-purpose model compares to Gemini 3.1 Pro or Opus 4.6.

That is why GPT-5.3-Codex belongs in Tier 1, but with more uncertainty than the other two February leaders.

Grok 4.20: xAI is still behind, but gaining ground

Grok 4.20 was not the cleanest February release, and it was not Tier 1 on AI IQ.

But it mattered.

xAI’s previous frontier position had been drifting. Grok 4 was useful, but it did not really put xAI in the same all-around conversation as OpenAI, Anthropic, and Google. Grok 4.20 changed that somewhat.

The model appeared in public beta in February, with coverage emphasizing a major step up in capability and daily bug-fix iteration, while xAI’s own developer notes later listed Grok 4.20 and Grok 4.20 Multi-agent as live on March 10. So this is not as clean as saying “xAI officially released a new flagship on February X.” It is better to say: February is when Grok 4.20 became visible as the next serious Grok checkpoint.

On AI IQ, Grok 4.20 sits in Tier 2. That feels right.

It is not yet Gemini 3.1 Pro, Opus 4.6, or GPT-5.3-Codex. But the jump from Grok 4 to Grok 4.20 suggests xAI is gaining ground rather than falling farther behind.

That is the important part. The fourth US lab is still in fourth place, but it is not irrelevant.

The Chinese wave: GLM-5, MiniMax-M2.5, and Qwen3.5

February also had a strong Chinese model wave.

None of GLM-5, MiniMax-M2.5, or Qwen3.5-397B became Tier 1 on AI IQ. But all three pushed the same broader pattern: Chinese labs are not merely trying to top a single benchmark. They are building models around agentic deployment, coding, cost, long context, and broad real-world utility.

GLM-5 was the strongest pure “engineering agent” story. Z.ai explicitly framed it as a move from coding to engineering, with an emphasis on backend architecture, complex algorithms, long-range agent tasks, and stubborn bug fixing. It also integrated DeepSeek Sparse Attention for better token efficiency while preserving long-context quality.

MiniMax-M2.5 was the cost-performance story. MiniMax claimed strong coding, tool-use, search, and office-work results, plus much lower cost for continuous agentic operation. The key line is not the SWE-Bench score. It is the $1/hour claim at 100 tokens per second. If that holds up in real workflows, it changes what kinds of agentic applications are economically reasonable.

Qwen3.5-397B was the architecture and deployment story. The open-weight version uses a 397B-total / 17B-active MoE design, combines Gated Delta Networks with sparse MoE, supports a large native context window, and is built as a native vision-language model. Reuters also reported Alibaba’s claim that Qwen3.5 was 60% cheaper and eight times better at processing large workloads than its predecessor.

The US labs still owned the top of the leaderboard in February.

But the Chinese labs continued to make Tier 2 much more usable.

That matters because most real AI usage will not be one-off frontier prompts. It will be millions of calls across coding agents, office agents, search agents, customer-support workflows, document systems, and internal automation. In that world, a model does not have to be the smartest model in the world to be economically important.

Updated model rankings

AI IQ’s February ranking can be summarized like this.

Tier 1

Gemini 3.1 Pro

The strongest overall model by the end of February. Best broad benchmark story, especially on abstract reasoning and all-around capability.

Claude Opus 4.6

The strongest long-running professional workflow model. Excellent for agentic work, coding, large context, research, and document/spreadsheet-heavy tasks.

GPT-5.3-Codex

A Tier 1 coding-agent model with unusually strong professional-work capabilities, but harder to compare because OpenAI did not release a general GPT-5.3.

Tier 2

Grok 4.20

A major step up for xAI, but still below the top three US labs overall.

Kimi K2.5

Still highly relevant from January as one of the strongest open-weight agentic models.

GLM-5

The strongest February Chinese release on engineering-agent positioning.

DeepSeek-V3.2

Still an important open-weight baseline even without being the month’s new headline.

MiniMax-M2.5

A cost-performance standout for coding, search, office work, and agentic tasks.

Qwen3.5-397B

An efficient open-weight native vision-language MoE model with a strong deployment story.

This is a more complicated ranking than January’s.

January’s story was: GPT-5.2 still leads, but open-weight models are becoming useful.

February’s story was: the top tier changed, but model choice became less obvious.

Dimension-by-dimension read

AI IQ evaluates models across Abstract Reasoning, Mathematical Reasoning, Programmatic Reasoning, and Academic Reasoning. It also compresses easier or more gameable benchmarks so saturated tests cannot dominate the composite score. That is especially important in February because the new releases have very different shapes.
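To make the idea of compression concrete, here is a toy sketch. It is not AI IQ's actual formula, which this post does not spell out. It assumes a simple scheme in which scores on a benchmark flagged as saturated are pulled toward a midpoint of 50 before averaging. The function names, the benchmark names, the compression factor, and all the scores are made up for illustration.

```python
def compress(score: float, factor: float) -> float:
    """Shrink a 0-100 score toward 50. factor=1.0 leaves it unchanged."""
    return 50.0 + factor * (score - 50.0)


def composite(scores: dict[str, float], factors: dict[str, float]) -> float:
    """Plain average of per-benchmark scores after optional compression."""
    adjusted = [compress(s, factors.get(name, 1.0)) for name, s in scores.items()]
    return sum(adjusted) / len(adjusted)


# Hypothetical scores: model_b is ahead only on the saturated test.
model_a = {"hard_eval": 70.0, "saturated_eval": 88.0}
model_b = {"hard_eval": 62.0, "saturated_eval": 98.0}

no_compression: dict[str, float] = {}
with_compression = {"saturated_eval": 0.25}  # assumed factor, not AI IQ's

raw_a = composite(model_a, no_compression)
raw_b = composite(model_b, no_compression)
adj_a = composite(model_a, with_compression)
adj_b = composite(model_b, with_compression)

print(raw_a, raw_b)  # model_b leads on the raw average
print(adj_a, adj_b)  # model_a leads once the saturated test is compressed
```

In this toy case the raw average favors the model that wins the saturated test, and the compressed average favors the model that wins the hard one. That is the general effect the composite is aiming for, whatever the real weighting looks like.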

Best overall IQ: Gemini 3.1 Pro

Gemini 3.1 Pro had the strongest overall February profile.

Its advantage came from breadth. It was not just a coding model or a math model. It was strong across abstract reasoning, programmatic reasoning, academic reasoning, multimodal problem-solving, and tool-heavy workflows.

The most important public signal was Google’s ARC-AGI-2 score. A 77.1% verified result on a hard abstract reasoning benchmark is not a normal incremental upgrade. It is the kind of result that changes the top of the ranking.

Best EQ: Opus 4.6

Opus 4.6 had the strongest EQ and professional-collaboration profile.

EQ in AI IQ is not just friendliness. It is a proxy for conversational judgment, calibration, tact, user alignment, and the ability to work well in high-context settings. That matters more as models move from answering questions to doing work with people.

Opus 4.6’s strength is that it does not just score well; it feels designed for handoff. Give it a complex project, a large context, and a vague but real professional goal, and it is one of the models most likely to stay useful without constant correction.

Best abstract reasoning: Gemini 3.1 Pro

Gemini 3.1 Pro was the February abstract reasoning leader.

That is the clearest part of its case. Google’s ARC-AGI-2 result was a major jump from Gemini 3 Pro, and AI IQ’s abstract reasoning dimension puts real weight on ARC-style tasks because they test novel pattern-solving more directly than knowledge-heavy benchmarks.

Best mathematical reasoning: GPT-5.3-Codex

GPT-5.3-Codex had the strongest February claim on math-adjacent reasoning, especially where math overlaps with formal reasoning, code, and tool-based problem-solving.

This is one of the places where the model’s specialization matters. A Codex model that can reason through repositories, run terminal workflows, and verify intermediate results is not just a coding autocomplete system. It starts to become a practical reasoning engine for technical work.

The caveat is coverage. GPT-5.3-Codex did not get the same clean all-domain benchmark treatment as a normal GPT flagship. So I would call it the strongest technical-reasoning model of February, but not use it alone to infer the full state of OpenAI’s general GPT line.

Best programmatic reasoning: Gemini 3.1 Pro overall; GPT-5.3-Codex for coding agents

This is the most nuanced category.

On AI IQ’s broader programmatic dimension, Gemini 3.1 Pro had the strongest all-around position. But GPT-5.3-Codex was the most important coding-agent release of the month.

That distinction matters. Programmatic reasoning is broader than patching code. AI IQ includes Terminal-Bench, SWE-Bench, and SciCode, with compression applied to more gameable benchmarks. So a model can be the best “coding agent” in a product sense while another model has the strongest overall programmatic IQ profile.

For coding-agent products, GPT-5.3-Codex should be near the top of the eval list. For broad technical reasoning across coding, science, terminal work, and structured problem-solving, Gemini 3.1 Pro still has the best February case.

Best academic reasoning: Gemini 3.1 Pro and Opus 4.6

Academic reasoning was close between Gemini 3.1 Pro and Opus 4.6.

Gemini 3.1 Pro had the stronger broad-reasoning profile. Opus 4.6 had the stronger professional-knowledge-work story, especially on long-context retrieval, GDPval-AA, BrowseComp, finance, research, and expert workflows.

The practical difference is this: Gemini 3.1 Pro looks like the better academic benchmark model; Opus 4.6 looks like the model you would trust with a long, messy professional research task.

Both belong in Tier 1.

Cost-performance: the gap below the frontier keeps narrowing

February also made cost-performance more important.

The top three models (Gemini 3.1 Pro, Opus 4.6, and GPT-5.3-Codex) are the models to test when quality matters most. But most production AI systems should not route every call to the most expensive model.

That is where MiniMax-M2.5, GLM-5, Qwen3.5-397B, Kimi K2.5, and DeepSeek-V3.2 matter.

MiniMax-M2.5 is the clearest example. A model that performs well on coding, search, tool use, and office work while costing roughly $1/hour to run continuously at 100 tokens per second is not just a cheaper benchmark competitor. It changes the economics of background agents, always-on coding assistants, and multi-step office automation.
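To put that claim in more familiar units, here is a back-of-the-envelope conversion. It is only a sketch: it assumes the $1/hour figure covers a single stream generating output at a steady 100 tokens per second, and it ignores input-token pricing, caching, and idle time, none of which the claim as quoted here spells out. The function name is mine, not MiniMax's.

```python
SECONDS_PER_HOUR = 3600


def implied_cost_per_million_tokens(dollars_per_hour: float,
                                    tokens_per_second: float) -> float:
    """Convert an hourly running cost at a steady generation rate
    into an implied price per million generated tokens."""
    tokens_per_hour = tokens_per_second * SECONDS_PER_HOUR
    return dollars_per_hour / tokens_per_hour * 1_000_000


# MiniMax's claim as reported above: $1/hour at 100 tokens per second.
per_million = implied_cost_per_million_tokens(1.0, 100.0)

# An always-on agent for a 30-day month, under the same assumptions.
monthly_cost = 1.0 * 24 * 30

print(f"{per_million:.2f}")  # 2.78 dollars per million generated tokens
print(monthly_cost)          # 720.0 dollars per month
```

Under those assumptions, 100 tokens per second is 360,000 tokens per hour, so $1/hour works out to roughly $2.78 per million generated tokens, or about $720 for a month of nonstop operation.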

Qwen3.5-397B is another example. It does not beat Gemini 3.1 Pro overall, but a 397B-total / 17B-active native vision-language MoE model with long context and open weights is exactly the kind of model teams will want to experiment with for lower-cost multimodal agents.

GLM-5 sits in the same category. Its positioning around system engineering, long-range agent tasks, and token efficiency makes it relevant for teams trying to route complex coding and engineering work without paying top-tier closed-model prices every time.

The best model stack after February probably looked something like this:

Use Gemini 3.1 Pro for the strongest broad reasoning and abstract problem-solving.

Use Opus 4.6 for long-running professional workflows, research, documents, spreadsheets, and coding tasks where trust and context retention matter.

Use GPT-5.3-Codex for serious coding-agent workflows, terminal tasks, repo work, and computer-use-heavy execution.

Use MiniMax-M2.5, GLM-5, Qwen3.5-397B, Kimi K2.5, or DeepSeek-V3.2 when cost, openness, latency, or deployment control matter more than squeezing out the last few points of frontier capability.

Use Grok 4.20 if you are specifically evaluating xAI’s ecosystem or want to see how quickly xAI is improving.

The better setup is not one model. It is routing.
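As a rough illustration of what that routing could look like, here is a minimal sketch that encodes the recommendations above as a lookup table. Everything in it is an assumption for illustration: the task categories, the `candidates` helper, and the model strings, which are just the names used in this post and not real API model identifiers. A production router would also weigh latency, price, and your own eval results.

```python
from enum import Enum


class TaskKind(Enum):
    BROAD_REASONING = "broad_reasoning"
    LONG_PROFESSIONAL = "long_professional"
    CODING_AGENT = "coding_agent"


# Display names from this article, not API model IDs.
FRONTIER_ROUTES = {
    TaskKind.BROAD_REASONING: "Gemini 3.1 Pro",
    TaskKind.LONG_PROFESSIONAL: "Opus 4.6",
    TaskKind.CODING_AGENT: "GPT-5.3-Codex",
}

# Models to try when cost, openness, or deployment control matter more.
TIER_2_POOL = [
    "MiniMax-M2.5",
    "GLM-5",
    "Qwen3.5-397B",
    "Kimi K2.5",
    "DeepSeek-V3.2",
]


def candidates(kind: TaskKind, cost_sensitive: bool = False) -> list[str]:
    """Return the models worth evaluating first for a given kind of task."""
    if cost_sensitive:
        # The article does not rank the Tier 2 pool per task, so return all of it.
        return list(TIER_2_POOL)
    return [FRONTIER_ROUTES[kind]]


print(candidates(TaskKind.CODING_AGENT))
print(candidates(TaskKind.CODING_AGENT, cost_sensitive=True))
```

The function returns a shortlist rather than a single winner on purpose: the point of routing is to narrow what you test for each workload, not to skip testing.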

What changed from January to February

January was mostly about the floor rising.

February was about the ceiling moving.

In January, GPT-5.2 and Gemini 3 Pro still sat at the top, while Kimi K2.5, GLM-4.7, MiniMax-M2.1, and GLM-4.7-Flash made the lower tiers more useful.

In February, the top changed. Gemini 3.1 Pro and Opus 4.6 displaced the old frontier. GPT-5.3-Codex gave OpenAI a stronger model, but in a specialist package. Grok 4.20 made xAI relevant again in the Tier 2 conversation. And the Chinese labs kept filling in the cost-performance layer.

That is why February mattered.

It was not just a month with more releases. It changed the shape of the model market.

What to watch next

The first thing to watch is OpenAI. GPT-5.3-Codex is clearly important, but it leaves an obvious question: where is the general-purpose GPT-5.3? If OpenAI folds Codex-level coding into a broader GPT model, the top of the leaderboard could move again quickly.

The second thing to watch is whether Gemini 3.1 Pro’s benchmark strength translates into everyday agentic reliability. The ARC-AGI-2 result is excellent. The practical question is whether developers and professionals feel the same jump in real workflows.

The third thing to watch is Opus 4.6 adoption in enterprise agents. Anthropic’s model is extremely well-positioned for long-context, high-trust, professional work. If businesses increasingly evaluate models on end-to-end task completion instead of chat quality, Opus 4.6 may end up being more important than its raw ranking suggests.

The fourth thing to watch is Grok 4.20. xAI is still behind the top three US labs, but the improvement rate matters. If Grok 4.20’s gains carry into a cleaner API release and stronger benchmark coverage, xAI becomes harder to dismiss.

The fifth thing to watch is the Chinese cost-performance tier. GLM-5, MiniMax-M2.5, and Qwen3.5-397B are not Tier 1 overall, but they are exactly the kinds of models that can win production routing decisions.

Bottom line

February 2026 was one of the most important AI model months so far.

Gemini 3.1 Pro became the strongest overall model in AI IQ’s February ranking.

Opus 4.6 became the strongest long-running professional workflow model.

GPT-5.3-Codex became OpenAI’s most important agentic coding model, but it was harder to compare because OpenAI did not release a general GPT-5.3.

Grok 4.20 brought xAI back into the conversation, even though it still sat below the top frontier labs.

GLM-5, MiniMax-M2.5, and Qwen3.5-397B made Tier 2 more serious, especially for coding, agents, cost, and deployment control.

The practical takeaway is that model selection got harder.

In January, you could mostly say GPT-5.2 was still the default, while open-weight models were becoming useful.

After February, that was no longer enough.

Use Gemini 3.1 Pro when you want the strongest broad reasoning.

Use Opus 4.6 when the work is long, messy, and professional.

Use GPT-5.3-Codex when the task is coding-agent work.

Use the best Tier 2 models when scale, openness, or cost matters more than the last few points of capability.

February did not settle the race.

It made the routing problem real.