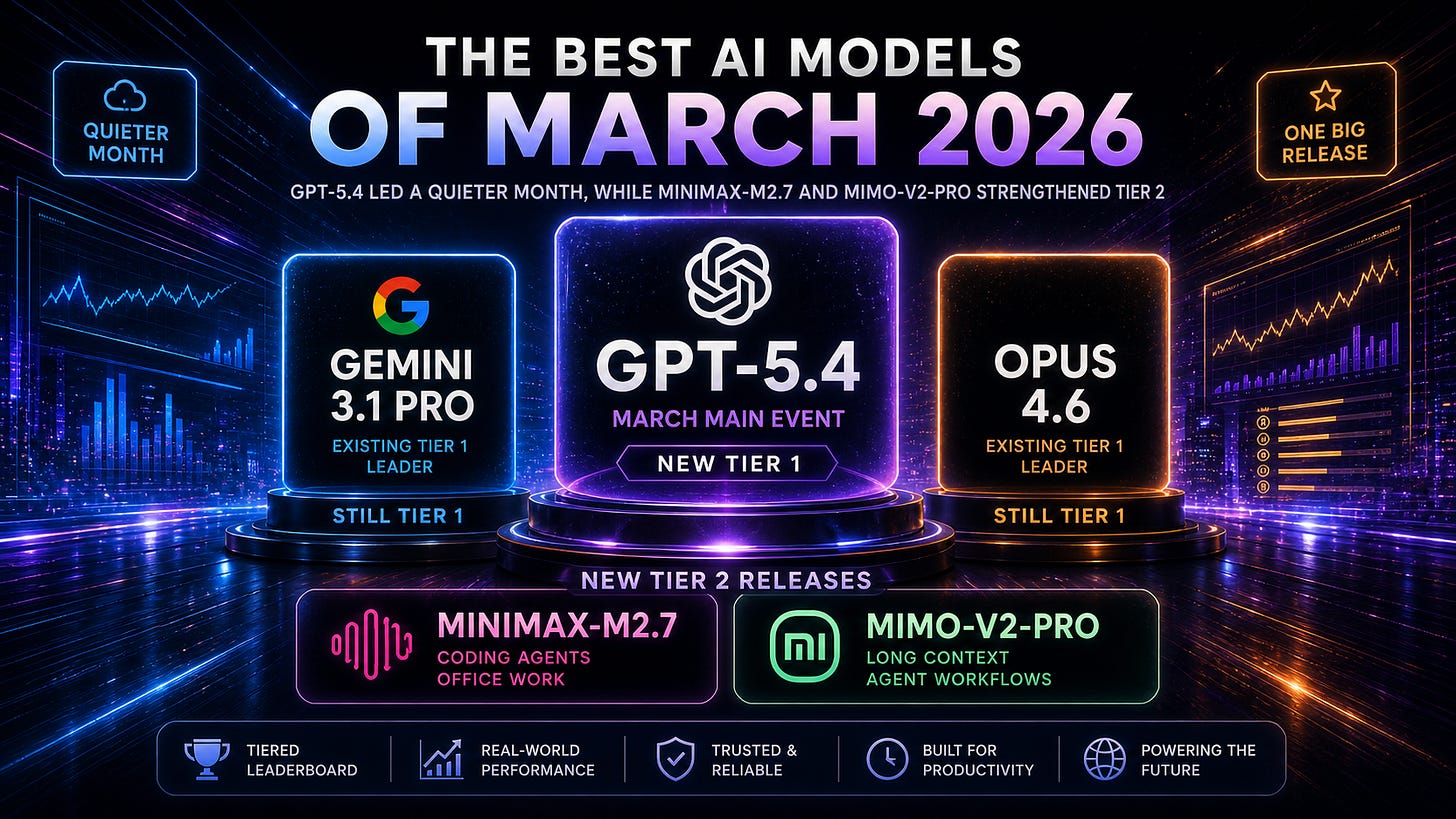

The Best AI Models of March 2026

GPT-5.4 moved the frontier, while MiniMax and Xiaomi made Tier 2 more interesting

March was a slower month for AI model releases than February.

There was no new Claude. No new Gemini. No new Grok. Most of the major US and Chinese labs were between release cycles.

But slow does not mean boring.

OpenAI released GPT-5.4 on March 5, and it immediately became one of the best models in the world. It did not completely erase Gemini 3.1 Pro or Opus 4.6, but it pushed the frontier forward in a very specific direction: real professional work. That means spreadsheets, documents, presentations, computer use, coding agents, tool use, and long-context work, all with fewer factual errors.

Then, on March 18, two Chinese labs released strong Tier 2 models: MiniMax-M2.7 and Xiaomi MiMo-V2-Pro. Neither model took the overall crown, but both made the cost-performance and agentic-deployment tier more competitive.

So March was not a broad release wave.

It was a month with one main event and two important follow-ups.

The main event was GPT-5.4.

The follow-ups were MiniMax-M2.7 and MiMo-V2-Pro showing that the layer below the frontier is still getting better.

March 2026 model releases

March had three releases that matter for AI IQ’s model rankings, plus one smaller OpenAI release that matters for routing.

March 5: OpenAI released GPT-5.4

GPT-5.4 was the clear headline release of the month. OpenAI positioned it as its strongest mainline reasoning model, with improvements across professional work, coding, computer use, tool use, academic reasoning, factuality, and long-context tasks. OpenAI also said GPT-5.4 was the first mainline reasoning model to incorporate the frontier coding capabilities of GPT-5.3-Codex.

March 17: OpenAI released GPT-5.4 mini and GPT-5.4 nano

These were not Tier 1 models, but they matter for production systems. GPT-5.4 mini and nano were designed for faster, cheaper, high-volume workloads, with OpenAI specifically emphasizing coding subagents, classification, data extraction, ranking, multimodal use, and low-latency tool workflows.

March 18: MiniMax released MiniMax-M2.7

MiniMax-M2.7 was the most interesting non-OpenAI release of March. MiniMax described it as its first model to participate deeply in its own development cycle, with strong agent-harness capabilities, real-world software engineering results, office-work performance, and native Agent Teams. It scored 56.22% on SWE-Pro, 55.6% on VIBE-Pro, and 57.0% on Terminal Bench 2 according to MiniMax’s launch post.

March 18: Xiaomi released MiMo-V2-Pro

MiMo-V2-Pro was Xiaomi’s flagship agent model, built for real-world agentic workloads. Xiaomi described it as a trillion-parameter model with 42B active parameters, support for up to 1M-token context, and strong agent benchmark performance, including #3 globally on PinchBench and ClawEval in Xiaomi’s published comparisons.

That is a lighter calendar than February’s, but the model-market impact was still real. GPT-5.4 became a Tier 1 model immediately, and MiniMax-M2.7 and MiMo-V2-Pro both earned Tier 2 positions.

The new March ranking

By the end of March, AI IQ’s top tiers looked like this.

Tier 1

Gemini 3.1 Pro

Still the overall leader in AI IQ’s March ranking. Google did not release a new model in March, but Gemini 3.1 Pro remained the model to beat, especially on broad reasoning and programmatic capability.

GPT-5.4

The new March release and the main event of the month. GPT-5.4 moved OpenAI back into the top cluster and became one of the strongest all-around models, especially for professional work, computer use, coding, tool use, and factuality.

Claude Opus 4.6

Still Tier 1 despite no March release. Opus 4.6 remained one of the best models for long-running professional workflows, high-context collaboration, coding agents, and EQ-heavy work.

Tier 2

Grok 4.20

Still xAI’s strongest model in the March window, but below the top three.

Kimi K2.5

Still highly relevant as an open-weight, multimodal, agentic model from January.

GLM-5

Still one of the stronger Chinese frontier-adjacent models, especially for engineering-agent workflows.

DeepSeek-V3.2

Still an important open-weight baseline.

MiniMax-M2.7

New in March, and the strongest MiniMax model yet. It is especially interesting for coding agents, production debugging, office work, and multi-agent workflows.

Qwen3.5-397B

Still a strong open-weight MoE model from February.

MiMo-V2-Pro

New in March, and one of the more interesting Chinese agent models because of its 1M context, 42B-active architecture, API pricing, and OpenClaw-style agent positioning.

The short version: March did not reshuffle the entire leaderboard. It inserted GPT-5.4 into Tier 1 and made Tier 2 more competitive.

GPT-5.4: the professional-work model

GPT-5.4 is the March release that matters most.

OpenAI’s launch post was not framed around chat quality or one narrow benchmark. It was framed around professional work: spreadsheets, presentations, documents, legal analysis, financial modeling, coding, computer use, and tool-heavy agentic workflows.

That is a meaningful shift. The highest-value AI workloads are increasingly not “answer this question.” They are “do this work.”

OpenAI reported that GPT-5.4 scored 87.3% on an internal investment-banking-style spreadsheet modeling benchmark, compared with 68.4% for GPT-5.2. It also said human raters preferred GPT-5.4 presentations over GPT-5.2 presentations 68.0% of the time.

The factuality improvement is also important. OpenAI said GPT-5.4 was its most factual model yet, with individual claims 33% less likely to be false and full responses 18% less likely to contain any errors relative to GPT-5.2, measured on de-identified prompts where users had flagged factual errors.

That is the core of GPT-5.4’s case. It is not just smarter in an abstract sense. It is better at the kinds of work people actually pay AI systems to do.

GPT-5.4’s biggest leap may be computer use

The most striking GPT-5.4 result is not a math score or a coding score.

It is computer use.

OpenAI described GPT-5.4 as its first general-purpose model with native computer-use capabilities. On OSWorld-Verified, GPT-5.4 scored 75.0%, compared with 47.3% for GPT-5.2, and above the human performance baseline of 72.4%.

That matters because computer use is one of the bridges between “model that answers questions” and “model that can operate software.”

A model that can reason through screenshots, issue mouse and keyboard actions, use browser environments, and operate tools reliably is much closer to being useful in the messy middle of knowledge work. Not just writing code. Not just summarizing. Actually moving through software systems.

GPT-5.4 also scored 67.3% on WebArena-Verified and 92.8% on Online-Mind2Web using screenshot-based observations alone, according to OpenAI.

This is why GPT-5.4 feels different from a normal incremental model release. It is not just another reasoning bump. It is a stronger base for software-operating agents.

GPT-5.4 vs Gemini 3.1 Pro vs Opus 4.6

The March Tier 1 is not cleanly ordered by one simple criterion.

Gemini 3.1 Pro still had the strongest overall AI IQ position by the end of March. It remained the best broad benchmark model in the March window, especially on abstract and programmatic reasoning.

GPT-5.4 was the strongest new release and the biggest March mover. It made OpenAI much more competitive with Gemini 3.1 Pro and Opus 4.6, especially on professional work, computer use, coding, tool use, factuality, and documents.

Opus 4.6 remained the model with the strongest claim for long-running, high-context professional workflows and EQ-heavy work.

The practical distinction is:

Use Gemini 3.1 Pro when you want the strongest broad benchmark profile.

Use GPT-5.4 when you care about professional work, computer use, factuality, coding, and tool-heavy agents.

Use Opus 4.6 when the task is long, messy, collaborative, and sensitive to workflow quality.

That is the uncomfortable reality of March: there was no single model that made the other two irrelevant.

GPT-5.4 was excellent. But March still ended with a three-model Tier 1.

GPT-5.4 mini and nano: the routing angle

GPT-5.4 mini and nano were not the headline models, but they may matter a lot in production.

OpenAI released them on March 17 as faster, cheaper models designed for high-volume workloads. GPT-5.4 mini supports text and image inputs, tool use, function calling, web search, file search, computer use, and skills, with a 400K context window. It costs $0.75 per million input tokens and $4.50 per million output tokens. GPT-5.4 nano costs $0.20 per million input tokens and $1.25 per million output tokens.

That is not just a pricing detail. It is a product architecture detail.

The best agent systems increasingly do not use one model for everything. They use a large model for planning, final judgment, and hard reasoning, then smaller models for subagents, codebase search, extraction, ranking, classification, and fast supporting tasks.

OpenAI explicitly framed GPT-5.4 mini this way: GPT-5.4 can handle planning and final judgment, while GPT-5.4 mini subagents handle narrower subtasks in parallel.

That pattern is going to matter more every month. The March story is not just that GPT-5.4 got smarter. It is that OpenAI’s model stack got easier to route.
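The planner-plus-subagents pattern can be sketched in a few lines. This is a minimal illustration, not OpenAI's implementation: `call_model` is a hypothetical stub standing in for a real API client, and the model identifier strings are assumed names rather than confirmed API IDs.

```python
from concurrent.futures import ThreadPoolExecutor

# Assumed identifiers for illustration; check the provider's docs for real IDs.
PLANNER_MODEL = "gpt-5.4"
SUBAGENT_MODEL = "gpt-5.4-mini"


def call_model(model: str, prompt: str) -> str:
    """Hypothetical stub for an API call; echoes which model handled the prompt."""
    return f"[{model}] {prompt}"


def run_task(task: str, subtasks: list[str]) -> str:
    # The large model plans the work.
    plan = call_model(PLANNER_MODEL, f"Plan: {task}")

    # Smaller subagents handle the narrow subtasks in parallel.
    with ThreadPoolExecutor(max_workers=4) as pool:
        results = list(pool.map(lambda s: call_model(SUBAGENT_MODEL, s), subtasks))

    # The large model makes the final judgment over the subagent output.
    summary = "; ".join(results)
    return call_model(PLANNER_MODEL, f"Review ({plan}): {summary}")
```

In a real system the stub would be replaced by network calls, which is where running the subagents in parallel actually saves wall-clock time.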

MiniMax-M2.7: the best non-OpenAI March release

MiniMax-M2.7 was the most interesting March release outside OpenAI.

The headline is not that M2.7 beat the Tier 1 models. It did not.

The headline is that MiniMax is building toward a different model of progress: models that participate in their own improvement, build agent harnesses, use memory and complex skills, and operate across messy organizational workflows.

MiniMax described M2.7 as its first model to deeply participate in its own evolution. During development, MiniMax says it used the model to update memory, build complex skills, improve reinforcement-learning experiment harnesses, and iterate on its learning process based on experiment results.

That could sound like marketing if the model were weak. But M2.7’s reported results are strong enough to pay attention to. MiniMax reported 56.22% on SWE-Pro, 55.6% on VIBE-Pro, and 57.0% on Terminal Bench 2. It also reported a GDPval-AA Elo of 1495, which MiniMax says was the highest among open-source models in its comparison.

The most interesting parts are practical. MiniMax says M2.7 can handle production debugging, correlate monitoring metrics with deployment timelines, connect to databases to verify root causes, identify missing migrations, and propose non-blocking index creation before submitting a merge request.

That is not normal “can write Python” coding. That is closer to software engineering operations.

M2.7 belongs in Tier 2, not Tier 1. But for teams building coding agents, office agents, or internal automation systems, it became a model worth testing.

MiMo-V2-Pro: Xiaomi enters the serious agent conversation

Xiaomi’s MiMo-V2-Pro was the other important March Tier 2 release.

The model is interesting for three reasons: scale, context, and agent positioning.

Xiaomi says MiMo-V2-Pro has more than 1T total parameters with 42B active, supports up to 1M-token context, and includes a lightweight Multi-Token Prediction layer for fast generation.

It is also built very explicitly for agents. Xiaomi describes MiMo-V2-Pro as a foundation model for real-world agentic workloads and says it is designed to orchestrate complex workflows, drive production engineering tasks, and serve as the “brain” of agent systems.

The benchmark positioning is aggressive. Xiaomi reported MiMo-V2-Pro at #3 globally on both PinchBench and ClawEval in its published comparisons. On ClawEval it approaches Opus 4.6, and on PinchBench it sits just below Opus 4.6 and MiMo-V2-Omni.

The pricing is also notable. Xiaomi lists MiMo-V2-Pro at $1 per million input tokens and $3 per million output tokens up to 256K context, and $2 input / $6 output from 256K to 1M context.
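To see what the two price bands mean for a single request, here is a rough cost sketch using the listed rates. It makes assumptions the listing does not spell out: that the band is chosen by the request's input length, that the chosen band applies to the whole request, and that 256K and 1M mean 256,000 and 1,000,000 tokens. Xiaomi's actual billing rules may differ.

```python
# USD per million tokens, as listed above: (input rate, output rate).
RATES = {
    "short": (1.00, 3.00),  # up to 256K context
    "long": (2.00, 6.00),   # 256K to 1M context
}
TIER_LIMIT = 256_000     # assumed token count for "256K"
MAX_CONTEXT = 1_000_000  # assumed token count for "1M"


def mimo_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate one request's cost, assuming the band follows input length."""
    if input_tokens > MAX_CONTEXT:
        raise ValueError("input exceeds the 1M-token context limit")
    band = "short" if input_tokens <= TIER_LIMIT else "long"
    in_rate, out_rate = RATES[band]
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000
```

Under these assumptions, a request with 100,000 input tokens and 10,000 output tokens costs about $0.13, while one with 500,000 input tokens and the same output costs about $1.06.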

That makes MiMo-V2-Pro easy to understand: it is not the best model overall, but it is a serious agent model with long context and much lower pricing than the top closed models.

That is exactly the kind of model that can win routing decisions.

Dimension-by-dimension read

AI IQ evaluates models across Abstract Reasoning, Mathematical Reasoning, Programmatic Reasoning, and Academic Reasoning. The composite IQ is the average of those dimensions, with easier or more gameable benchmarks compressed so they cannot dominate the final score.
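As a rough sketch, the composite is just an equal-weight mean over the four dimensions. The compression step is not specified here, so the code below assumes each dimension score has already been compressed upstream; the dictionary keys are illustrative names, not AI IQ's own identifiers.

```python
from statistics import mean

# Illustrative keys for the four dimensions named above.
DIMENSIONS = ("abstract", "mathematical", "programmatic", "academic")


def composite_iq(scores: dict[str, float]) -> float:
    """Average the four dimension scores, assumed to be already compressed."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    return mean(scores[d] for d in DIMENSIONS)
```

Because every dimension carries the same weight, a model with one standout dimension and three average ones can still trail a model that is evenly strong across all four.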

That framework is useful for March because the models had very different shapes.

Best overall IQ: Gemini 3.1 Pro

Gemini 3.1 Pro remained the overall AI IQ leader in the March window.

GPT-5.4 narrowed the gap and moved OpenAI back into the top cluster, but Gemini 3.1 Pro still had the strongest overall profile by the end of March.

This is why March was not simply “GPT-5.4 wins.” GPT-5.4 was the best new release, but Gemini 3.1 Pro was still the model sitting at the top of the March ranking.

Best March release: GPT-5.4

Among models released in March, GPT-5.4 was the clear winner.

It was the only March release that earned a Tier 1 spot. It improved meaningfully over GPT-5.2, absorbed the Codex gains from GPT-5.3-Codex into a mainline model, and became one of the best models in the world across professional work, computer use, coding, tool use, and academic reasoning.

GPT-5.4 was not a minor checkpoint. It was the March model that changed the top tier.

Best EQ: Opus 4.6

Opus 4.6 remained the strongest EQ model in the March window.

This is one of the reasons Opus 4.6 stayed Tier 1 even after GPT-5.4 launched. AI IQ’s EQ ranking is designed to capture emotional intelligence, conversational judgment, and human preference signals. Those qualities matter more as models move from answering questions to working with people.

For long-running, high-context, user-facing work, Opus 4.6 still had a strong claim.

Best abstract reasoning: Gemini 3.1 Pro

Gemini 3.1 Pro remained the abstract reasoning leader.

GPT-5.4 improved OpenAI’s position substantially, especially compared with GPT-5.2, but Gemini 3.1 Pro was still the strongest model on AI IQ’s abstract reasoning dimension in the March window.

That matters because abstract reasoning is one of the harder dimensions to fake. It is less about memorized facts and more about solving novel patterns.

Best mathematical reasoning: GPT-5.4, with GPT-5.3-Codex still relevant

GPT-5.4 had the strongest March-release math profile.

OpenAI reported GPT-5.4 at 47.6% on FrontierMath Tier 1–3 and 27.1% on FrontierMath Tier 4, with GPT-5.4 Pro scoring 50.0% and 38.0%, respectively.

GPT-5.3-Codex remained relevant for technical reasoning, especially where math overlaps with coding, tools, and verification. But GPT-5.4 mattered because it brought those Codex-style strengths into the mainline GPT family.

Best programmatic reasoning: Gemini 3.1 Pro overall; GPT-5.4 as the March mover

Gemini 3.1 Pro still had the strongest overall programmatic reasoning profile in the March window.

But GPT-5.4 was the major new programmatic-reasoning release. OpenAI reported GPT-5.4 at 57.7% on SWE-Bench Pro and 75.1% on Terminal-Bench 2.0. GPT-5.3-Codex still led GPT-5.4 on Terminal-Bench 2.0 in OpenAI’s table, but GPT-5.4 was a much stronger all-around mainline model than GPT-5.2.

MiniMax-M2.7 deserves mention here too. It did not take the top programmatic slot, but its SWE-Pro, VIBE-Pro, Terminal Bench 2, and production-debugging profile made it one of the strongest Tier 2 coding-agent releases of the month.

Best academic reasoning: Gemini 3.1 Pro and GPT-5.4

Academic reasoning was close at the top.

Gemini 3.1 Pro remained extremely strong, but GPT-5.4 gave OpenAI a major new academic-reasoning profile. OpenAI reported GPT-5.4 at 39.8% on Humanity’s Last Exam without tools, 52.1% with tools, 92.8% on GPQA Diamond, and 33.0% on Frontier Science Research.

That puts GPT-5.4 squarely in the top-tier academic-reasoning conversation.

Cost-performance: March was really about routing

The most practical lesson from March is that routing kept getting more important.

GPT-5.4 is expensive but good. OpenAI lists GPT-5.4 API pricing at $2.50 per million input tokens and $15 per million output tokens, compared with GPT-5.2 at $1.75 input and $14 output. GPT-5.4 Pro is far more expensive at $30 per million input tokens and $180 per million output tokens.

That pricing is reasonable if GPT-5.4 is doing high-value work. But it makes no sense to use the most expensive model for every subtask in a large agent loop.
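A little arithmetic makes the point concrete. The sketch below uses the OpenAI list prices quoted in this article; the dictionary keys are shorthand labels rather than confirmed API model IDs, and the agent-loop workload is a made-up example.

```python
# USD per million tokens (input, output), as quoted in this article.
PRICES = {
    "gpt-5.4-pro": (30.00, 180.00),
    "gpt-5.4": (2.50, 15.00),
    "gpt-5.2": (1.75, 14.00),
    "gpt-5.4-mini": (0.75, 4.50),
    "gpt-5.4-nano": (0.20, 1.25),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at list price."""
    in_rate, out_rate = PRICES[model]
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


def loop_cost(model: str, calls: int, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of an agent loop that makes `calls` identical requests."""
    return calls * request_cost(model, input_tokens, output_tokens)
```

For a hypothetical loop of 1,000 calls, each with 10,000 input tokens and 1,000 output tokens, this works out to roughly $40 on GPT-5.4, $12 on GPT-5.4 mini, $3.25 on GPT-5.4 nano, and $480 on GPT-5.4 Pro.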

That is where March got interesting.

GPT-5.4 mini and nano gave OpenAI cheaper subagent options. MiniMax-M2.7 gave builders a strong Tier 2 coding and office-work model. MiMo-V2-Pro gave builders a 1M-context agent model with much lower listed pricing than Opus-class models.

The better stack after March looked something like this:

Use Gemini 3.1 Pro for the strongest broad reasoning and benchmark profile.

Use GPT-5.4 for professional work, computer use, coding, documents, spreadsheets, presentations, factuality-sensitive tasks, and tool-heavy agents.

Use Opus 4.6 for long-running workflows where trust, EQ, and high-context collaboration matter.

Use GPT-5.4 mini or nano for cheaper OpenAI subagents, extraction, ranking, classification, lightweight coding tasks, and fast supporting work.

Use MiniMax-M2.7 for coding-agent experiments, office-work agents, production-debugging workflows, and multi-agent harnesses.

Use MiMo-V2-Pro for lower-cost 1M-context agent workflows, especially where OpenClaw-style agent behavior matters.

That is the March pattern. The top model matters, but the stack matters more.
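One simple way to encode a stack like this is a lookup table from task type to model. The sketch below is illustrative only: the task labels and model identifier strings are invented for the example, the split between mini and nano is an arbitrary choice, and the fallback model is an assumption rather than a recommendation from any vendor.

```python
# Routing table distilled from the recommendations above.
# Task labels and model identifiers are illustrative, not official API names.
ROUTES = {
    "broad_reasoning": "gemini-3.1-pro",
    "professional_work": "gpt-5.4",
    "computer_use": "gpt-5.4",
    "long_running_collaboration": "claude-opus-4.6",
    "extraction": "gpt-5.4-nano",
    "classification": "gpt-5.4-nano",
    "lightweight_coding": "gpt-5.4-mini",
    "production_debugging": "minimax-m2.7",
    "long_context_agent": "mimo-v2-pro",
}
DEFAULT_MODEL = "gpt-5.4-mini"  # assumed fallback for unrecognized task types


def route(task_type: str) -> str:
    """Return the model assigned to a task type, or the fallback."""
    return ROUTES.get(task_type, DEFAULT_MODEL)
```

A static table like this is the crudest form of routing. Real systems often add cost ceilings, latency budgets, or an escalation path to a larger model when a cheaper one fails.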

What changed from February to March

February was a broad frontier-reset month.

Gemini 3.1 Pro and Opus 4.6 pushed Tier 1 forward. GPT-5.3-Codex made OpenAI harder to compare because it was clearly powerful, but specialized. Grok 4.20 brought xAI back into Tier 2. GLM-5, MiniMax-M2.5, and Qwen3.5-397B made the Chinese cost-performance layer stronger.

March was narrower.

There was one major frontier release: GPT-5.4.

That release mattered because it answered the main question left open by February: what happens when OpenAI folds GPT-5.3-Codex-style coding capability back into a general-purpose GPT model?

The answer was GPT-5.4.

And it was strong enough to make the Tier 1 conversation genuinely three-way again.

What to watch next

The first thing to watch is whether GPT-5.4 becomes the default model for professional agents. OpenAI’s benchmark story is strongest around documents, spreadsheets, presentations, computer use, and tool workflows. The real signal will be whether users feel that improvement in day-to-day work.

The second thing to watch is Gemini. Google skipped March after releasing Gemini 3.1 Pro in February. If Google ships another major update, the top of the ranking could move again quickly.

The third thing to watch is Anthropic. Opus 4.6 remained Tier 1 in March, especially on EQ and long-running workflows, but GPT-5.4 narrowed the professional-work gap. Anthropic’s next release will need to push hard on reliability, coding agents, and long-context work.

The fourth thing to watch is MiniMax-M2.7 adoption. The model’s self-evolution framing is interesting, but adoption will depend on whether developers find it reliable inside real harnesses.

The fifth thing to watch is MiMo-V2-Pro’s agent performance in the wild. Xiaomi’s pricing and 1M-context support are compelling. The question is whether it holds up outside curated agent benchmarks.

Bottom line

March 2026 was quieter than February, but it still mattered.

Gemini 3.1 Pro remained the overall leader in AI IQ’s March ranking.

GPT-5.4 was the best new model of the month and immediately joined Tier 1.

Opus 4.6 stayed Tier 1 because of its EQ, long-running workflow quality, and professional-agent profile.

MiniMax-M2.7 became one of the most interesting Tier 2 models for coding agents, office work, and agent-harness development.

MiMo-V2-Pro gave Xiaomi a serious Tier 2 agent model with 1M context, 42B active parameters, and aggressive pricing.

The practical takeaway is simple: March made the top tier more competitive, but it also made routing more important.

Use the best model when the task is hard.

Use the cheaper model when the task is frequent.

Use the model with the right shape when the workflow is specific.

GPT-5.4 was the headline.

But March’s bigger lesson is that serious AI systems are becoming model stacks, not model choices.